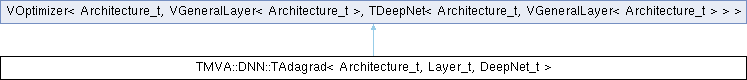

Adagrad Optimizer class.

This class represents the Adagrad Optimizer.

Public Types

| Alias | Underlying type |
| --- | --- |
| Matrix_t | typename Architecture_t::Matrix_t |
| Scalar_t | typename Architecture_t::Scalar_t |

Public Member Functions

| Return type | Member | Description |
| --- | --- | --- |
| | TAdagrad (DeepNet_t &deepNet, Scalar_t learningRate=0.01, Scalar_t epsilon=1e-8) | Constructor. |
| | ~TAdagrad ()=default | Destructor. |
| Scalar_t | GetEpsilon () const | Getter for the smoothing term fEpsilon. |
| std::vector< std::vector< Matrix_t > > & | GetPastSquaredBiasGradients () | Getter for fPastSquaredBiasGradients. |
| std::vector< Matrix_t > & | GetPastSquaredBiasGradientsAt (size_t i) | Getter for element i of fPastSquaredBiasGradients. |
| std::vector< std::vector< Matrix_t > > & | GetPastSquaredWeightGradients () | Getter for fPastSquaredWeightGradients. |
| std::vector< Matrix_t > & | GetPastSquaredWeightGradientsAt (size_t i) | Getter for element i of fPastSquaredWeightGradients. |
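The accumulator getters return non-const references to nested vectors, so callers read and modify the optimizer state in place. The sketch below mimics that accessor pattern with a hypothetical `MockAdagradState` class and a hypothetical `Matrix` alias standing in for `Architecture_t::Matrix_t`; it is not the TMVA class. Treating the outer index as the layer index is an assumption based on the `layerIndex` parameter of the update functions.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for Architecture_t::Matrix_t (a flat buffer here).
using Matrix = std::vector<double>;

// Hypothetical mock of the accessor pattern; not the TMVA implementation.
class MockAdagradState {
public:
   // Assumed layout: one inner vector per layer, one zero-filled matrix per tensor.
   MockAdagradState(std::size_t nLayers, std::size_t nTensors, std::size_t nElements)
      : fPastSquaredWeightGradients(nLayers, std::vector<Matrix>(nTensors, Matrix(nElements, 0.0)))
   {
   }

   // Returns the full nested container by reference.
   std::vector<std::vector<Matrix>> &GetPastSquaredWeightGradients() { return fPastSquaredWeightGradients; }

   // Returns the accumulators stored at outer index i by reference.
   std::vector<Matrix> &GetPastSquaredWeightGradientsAt(std::size_t i)
   {
      assert(i < fPastSquaredWeightGradients.size());
      return fPastSquaredWeightGradients[i];
   }

private:
   std::vector<std::vector<Matrix>> fPastSquaredWeightGradients;
};
```

Because both getters hand out references, a write through `GetPastSquaredWeightGradientsAt(i)` is visible through `GetPastSquaredWeightGradients()[i]` without any copy.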

Protected Member Functions

| Return type | Member | Description |
| --- | --- | --- |
| void | UpdateBiases (size_t layerIndex, std::vector< Matrix_t > &biases, const std::vector< Matrix_t > &biasGradients) override | Update the biases, given the current bias gradients. |
| void | UpdateWeights (size_t layerIndex, std::vector< Matrix_t > &weights, const std::vector< Matrix_t > &weightGradients) override | Update the weights, given the current weight gradients. |

Protected Attributes

| Type | Attribute | Description |
| --- | --- | --- |
| Scalar_t | fEpsilon | The smoothing term used to avoid division by zero. |
| std::vector< std::vector< Matrix_t > > | fPastSquaredBiasGradients | The sum of the squares of the past bias gradients associated with the deep net. |
| std::vector< std::vector< Matrix_t > > | fPastSquaredWeightGradients | The sum of the squares of the past weight gradients associated with the deep net. |
| std::vector< std::vector< Matrix_t > > | fWorkBiasTensor | Working tensor used to keep a temporary copy of biases or bias gradients. |
| std::vector< std::vector< Matrix_t > > | fWorkWeightTensor | Working tensor used to keep a temporary copy of weights or weight gradients. |
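The attributes above map onto the standard Adagrad rule: accumulate the squared gradients, then scale each step by the inverse square root of that running sum. A minimal element-wise sketch follows, assuming flat `std::vector<double>` buffers instead of `Matrix_t`. The `AdagradState` struct and `AdagradStep` function are hypothetical names for illustration, and placing epsilon inside the square root is one common convention, not a confirmed detail of this class.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical per-tensor optimizer state; field names are illustrative only.
struct AdagradState {
   double learningRate;                  // step size (constructor default in the listing: 0.01)
   double epsilon;                       // smoothing term (constructor default in the listing: 1e-8)
   std::vector<double> pastSquaredGrads; // running sum of squared gradients
};

// One Adagrad step, applied element-wise:
//   G <- G + g * g
//   w <- w - learningRate * g / sqrt(G + epsilon)
inline void AdagradStep(AdagradState &state, std::vector<double> &weights, const std::vector<double> &grads)
{
   assert(weights.size() == grads.size());
   assert(state.pastSquaredGrads.size() == grads.size());
   for (std::size_t i = 0; i < weights.size(); ++i) {
      state.pastSquaredGrads[i] += grads[i] * grads[i];
      weights[i] -= state.learningRate * grads[i] / std::sqrt(state.pastSquaredGrads[i] + state.epsilon);
   }
}
```

Because the accumulator only grows, the effective step size for each parameter shrinks over time, and parameters that have seen large gradients shrink fastest. The same rule applies to biases with a separate accumulator.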

#include <TMVA/DNN/Adagrad.h>

using TMVA::DNN::TAdagrad< Architecture_t, Layer_t, DeepNet_t >::Matrix_t = typename Architecture_t::Matrix_t

using TMVA::DNN::TAdagrad< Architecture_t, Layer_t, DeepNet_t >::Scalar_t = typename Architecture_t::Scalar_t

TMVA::DNN::TAdagrad< Architecture_t, Layer_t, DeepNet_t >::TAdagrad (DeepNet_t & deepNet, Scalar_t learningRate = 0.01, Scalar_t epsilon = 1e-8)
